Introduction

Quantum Boltzmann Machines (QBMs) are quantum extensions of classical Boltzmann Machines, which are energy-based probabilistic models used for unsupervised learning. Unlike their classical counterparts, QBMs use quantum superposition and entanglement, which may let them represent some complex probability distributions more compactly.

They are of particular interest for:

- Sampling complex distributions, potentially faster than classical methods

- Feature extraction for deep learning

- Optimization tasks where energy-based modeling is crucial

Mathematical Foundation

A classical Boltzmann Machine defines the probability of a state x as:\[P(x) = \frac{e^{-E(x)}}{Z}\]

where:

- \(E(x)\) = Energy of state x

- \(Z = \sum_x e^{-E(x)}\) = Partition function (normalization factor)
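
To make the definition concrete, here is a minimal sketch that enumerates every binary state of a tiny system and normalizes \(e^{-E(x)}\) by the partition function. The function name `boltzmann_distribution` and the toy energy (two units that prefer to agree) are illustrative assumptions, not part of any particular library.

```python
import itertools
import math

def boltzmann_distribution(energy, n_units):
    """Return {state: probability} over all binary states of n_units units."""
    states = list(itertools.product([0, 1], repeat=n_units))
    # Unnormalized weights e^{-E(x)} for every state x.
    weights = [math.exp(-energy(s)) for s in states]
    z = sum(weights)  # partition function Z
    return {s: w / z for s, w in zip(states, weights)}

# Hypothetical toy energy: the two units pay an energy cost of 1 when they disagree.
def toy_energy(state):
    a, b = state
    return 0.0 if a == b else 1.0

probs = boltzmann_distribution(toy_energy, 2)
```

Brute-force enumeration like this scales as \(2^n\) in the number of units, which is why practical Boltzmann Machines rely on sampling instead.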

In a Quantum Boltzmann Machine, the energy function is replaced with a Hamiltonian H:\[\rho = \frac{e^{-\beta H}}{Z}\]

where:

- \(\rho\) = density matrix (quantum probability distribution)

- \(\beta\) = inverse temperature parameter

- \(H\) = Hamiltonian encoding weights and biases

- \(Z = \mathrm{Tr}(e^{-\beta H})\) = quantum partition function

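This allows the QBM to model distributions that arise from quantum states: measuring \(\rho\) in the computational basis yields a state \(x\) with probability \(\langle x|\rho|x\rangle\), and when \(H\) is diagonal this reduces to a classical Boltzmann distribution.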
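
The density matrix can be computed exactly for very small systems. The sketch below assumes NumPy and a toy single-qubit Hamiltonian with illustrative coefficients; `gibbs_state` is a hypothetical helper name. It builds \(\rho\) from the eigendecomposition of \(H\) and reads the measurement probabilities off the diagonal.

```python
import numpy as np

def gibbs_state(H, beta):
    """Return rho = exp(-beta*H) / Tr(exp(-beta*H)) for a Hermitian matrix H."""
    evals, evecs = np.linalg.eigh(H)
    # Subtract the smallest eigenvalue for numerical stability; the shift cancels in rho.
    weights = np.exp(-beta * (evals - evals.min()))
    weights /= weights.sum()  # dividing by the sum plays the role of 1/Z
    # Sum over eigenvectors: rho = sum_i weights[i] * |v_i><v_i|
    return (evecs * weights) @ evecs.conj().T

# Pauli matrices and a toy Hamiltonian H = -0.5*X - 1.0*Z (values chosen for illustration).
X = np.array([[0.0, 1.0], [1.0, 0.0]])
Z = np.array([[1.0, 0.0], [0.0, -1.0]])
H = -0.5 * X - 1.0 * Z

rho = gibbs_state(H, beta=1.0)
probs = np.real(np.diag(rho))  # computational-basis measurement probabilities
```

Because this \(H\) contains an off-diagonal term, \(\rho\) has nonzero off-diagonal entries, which is the feature a classical Boltzmann distribution cannot express.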

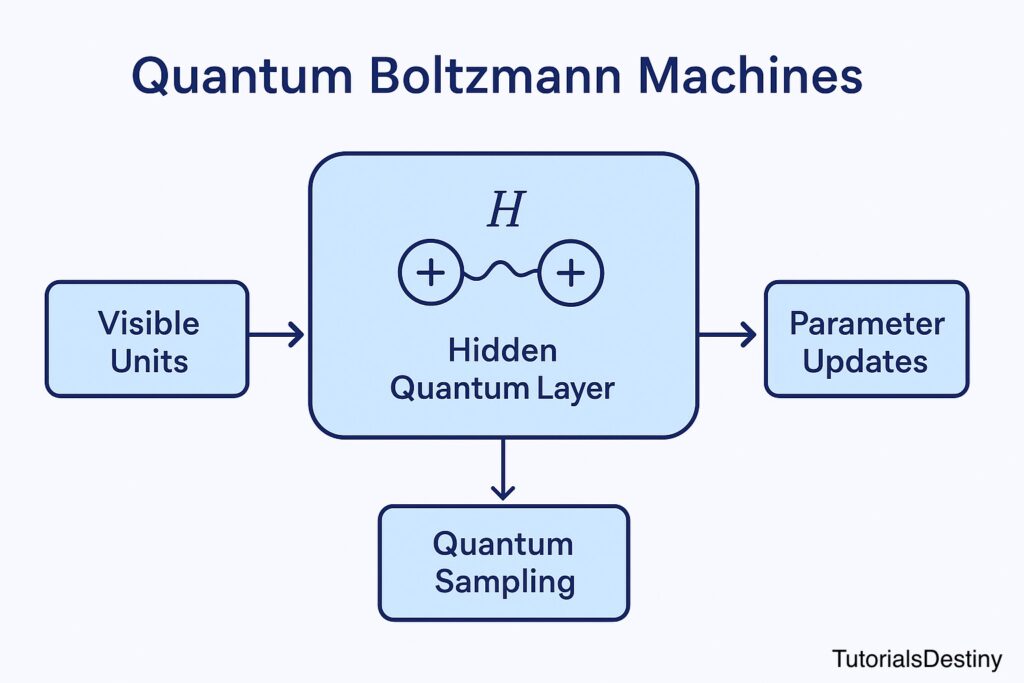

Structure of a QBM

- Visible Units (classical input/output layer): Encodes observed data

- Hidden Units (quantum layer): Encodes latent features using qubits

- Hamiltonian (Energy Function): Defines interactions (weights + biases) between visible and hidden units

- Quantum Sampling: Draws samples by measuring the prepared quantum state, which may be more efficient than classical Markov chain sampling for some distributions
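
The text above does not fix a specific form for the Hamiltonian. One common choice in the QBM literature, taken here as an assumption, is a transverse-field Ising Hamiltonian over all units (visible and hidden):

\[H = -\sum_{a} \Gamma_a \sigma^x_a - \sum_{a} b_a \sigma^z_a - \sum_{a<b} w_{ab}\, \sigma^z_a \sigma^z_b\]

Here \(b_a\) are the biases, \(w_{ab}\) are the weights, \(\Gamma_a\) is the transverse-field strength, and \(\sigma^x_a\), \(\sigma^z_a\) are Pauli operators acting on unit \(a\). When every \(\Gamma_a = 0\), \(H\) is diagonal and the model reduces to a classical Boltzmann Machine.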

Workflow of Training a QBM

- Initialize Hamiltonian parameters (weights + biases)

- Encode visible units (data) into qubits

- Prepare the thermal state \(ρ\) of the Hamiltonian (for example, by letting the system equilibrate under it) → obtain quantum state

- Sample states using quantum measurement

- Update parameters via gradient-based optimization

- Repeat until convergence (the training objective, such as the negative log-likelihood of the data, stops improving)
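
The steps above can be sketched end to end with an exact classical simulation of a two-qubit, fully visible model. This is a toy illustration under several assumptions, not a reference implementation: the transverse-field Ising form of the Hamiltonian, the fixed field `gamma`, the helper names (`hamiltonian`, `model_probs`, `nll`, `train`), the target distribution, and the use of finite-difference gradients in place of sample-based estimates are all choices made here for brevity.

```python
import numpy as np

I2 = np.eye(2)
X = np.array([[0.0, 1.0], [1.0, 0.0]])
Z = np.array([[1.0, 0.0], [0.0, -1.0]])

def hamiltonian(theta, gamma=0.5):
    """Two-qubit transverse-field Ising Hamiltonian; theta = (b0, b1, w)."""
    b0, b1, w = theta
    return (-gamma * (np.kron(X, I2) + np.kron(I2, X))
            - b0 * np.kron(Z, I2)
            - b1 * np.kron(I2, Z)
            - w * np.kron(Z, Z))

def model_probs(theta, beta=1.0):
    """Computational-basis probabilities <v|rho|v> of the thermal state."""
    evals, evecs = np.linalg.eigh(hamiltonian(theta))
    p = np.exp(-beta * (evals - evals.min()))
    p /= p.sum()
    rho = (evecs * p) @ evecs.conj().T
    return np.real(np.diag(rho))

def nll(theta, data_probs):
    """Negative log-likelihood of the data distribution under the model."""
    return -np.sum(data_probs * np.log(model_probs(theta)))

def train(data_probs, steps=300, lr=0.2, eps=1e-5):
    """Gradient descent on the NLL using central finite differences."""
    theta = np.zeros(3)  # step 1: initialize parameters
    for _ in range(steps):
        grad = np.zeros_like(theta)
        for i in range(len(theta)):
            shift = np.zeros_like(theta)
            shift[i] = eps
            grad[i] = (nll(theta + shift, data_probs)
                       - nll(theta - shift, data_probs)) / (2 * eps)
        theta -= lr * grad  # step 5: parameter update
    return theta

# Hypothetical target over states 00, 01, 10, 11: the two bits tend to agree.
data_probs = np.array([0.4, 0.1, 0.1, 0.4])
theta = train(data_probs)
```

On real hardware the exact probabilities in `model_probs` would be replaced by estimates from repeated measurements, and the gradient would be estimated from those samples rather than by finite differences.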

Applications of QBMs

✅ Quantum-enhanced generative models – learning distributions from data

✅ Quantum optimization – heuristic approaches to hard combinatorial problems (no general quantum speedup for NP-hard problems has been proven)

✅ Quantum-inspired deep learning – acting as hidden layers for QNNs

✅ Drug discovery & materials science – sampling molecular distributions

Visual Diagram

👉 A workflow diagram of QBMs showing:

- Visible layer (input)

- Hidden quantum layer (qubits + Hamiltonian)

- Quantum sampling + parameter updates

➡️ Next: Hybrid Quantum–Classical Algorithms