Introduction: A New Way to Build Software

A few years ago, if someone told you that you could build an app without writing much code, it would have sounded unrealistic. Programming was always seen as a technical skill—something that required years of practice, memorizing syntax, and solving complex problems.

But things are changing fast.

Today, a new approach called vibe coding is transforming how people create software. Instead of focusing on writing every line of code manually, developers—and even beginners—are now building projects by simply describing what they want.

This shift is not just about convenience. It represents a fundamental change in how we think about programming itself.

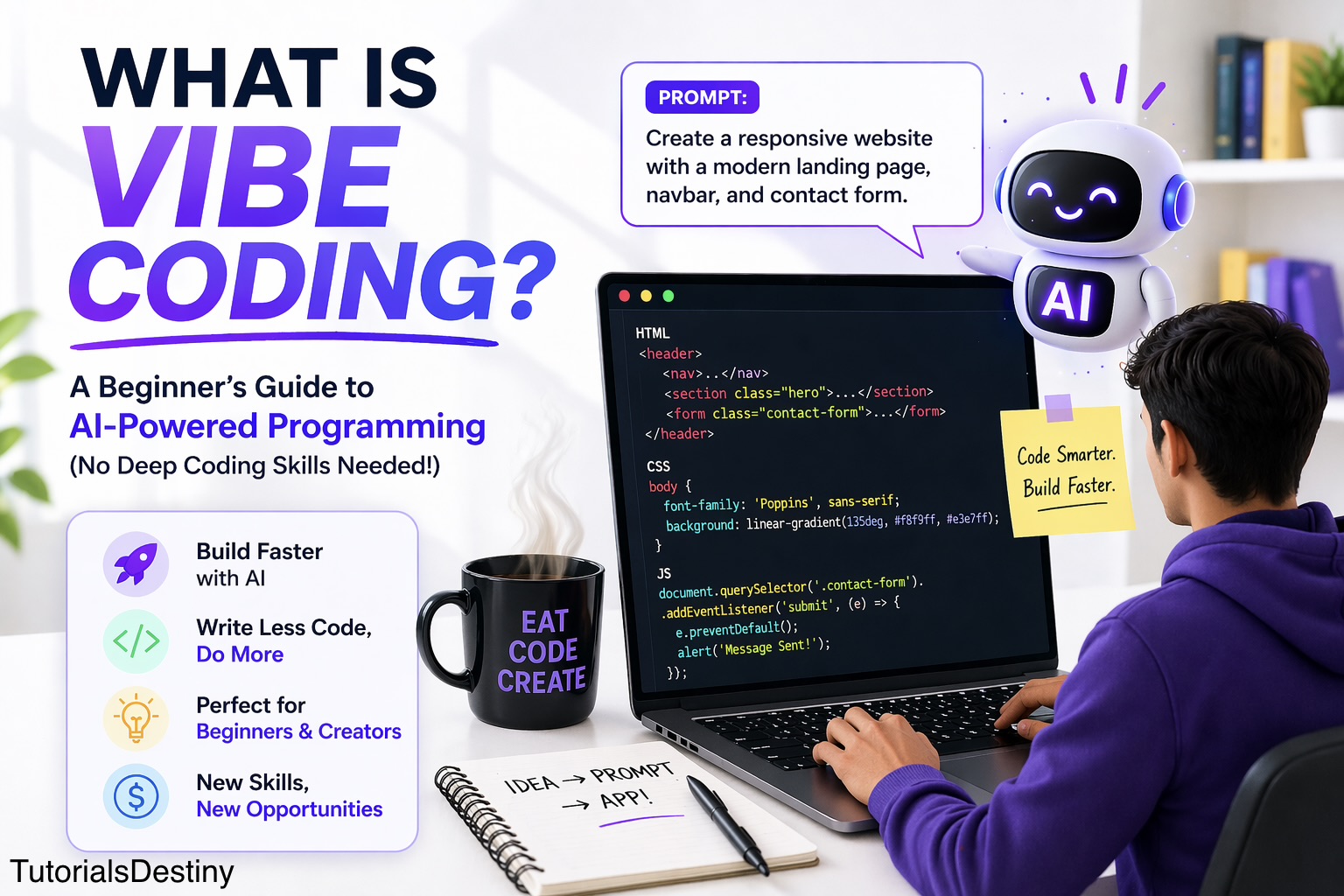

So, What Exactly is Vibe Coding?

At its core, vibe coding is about communicating your intent rather than manually constructing code.

In traditional programming, you would sit down and carefully write instructions in a specific language like Python or JavaScript. Every bracket, every semicolon, every function matters. The process is precise but often time-consuming.

With vibe coding, the process feels different. You describe your idea in plain language, and an AI system translates that idea into working code.

For example, instead of writing a loop yourself, you might simply say:

“Create a program that prints numbers from 1 to 10.”

Within seconds, the AI generates the solution.
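To make that concrete, here is a minimal sketch of what an AI assistant might return for this prompt, assuming it chose Python. The function name `numbers_up_to` is only an illustration; different tools will produce different code.

```python
def numbers_up_to(limit: int = 10) -> list[int]:
    """Return the whole numbers from 1 to `limit`, inclusive."""
    return list(range(1, limit + 1))


if __name__ == "__main__":
    # Print each number on its own line, as the prompt asked.
    for number in numbers_up_to():
        print(number)
```

Even for a result this small, it is worth reading the code and checking that it really starts at 1 and stops at 10.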

What makes this powerful is not just the speed, but the accessibility. People who once felt intimidated by coding are now able to build real projects.

Why Vibe Coding is Suddenly Everywhere

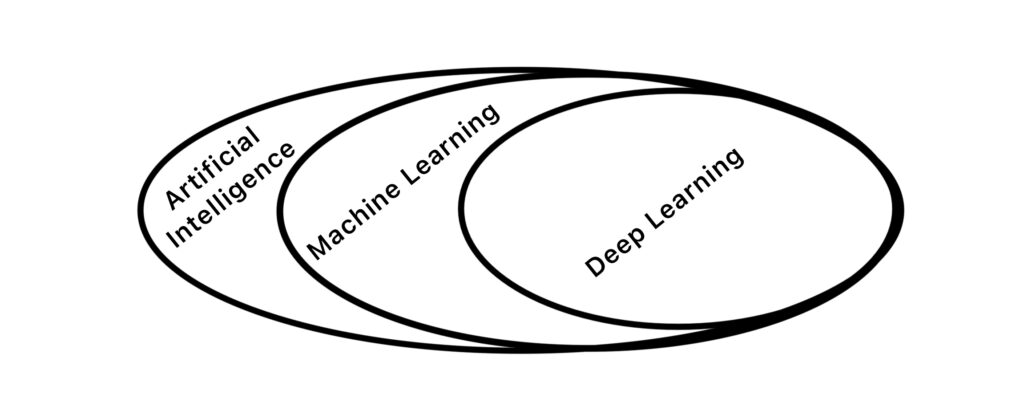

The rise of vibe coding didn’t happen overnight. It is the result of rapid advancements in artificial intelligence, especially in systems that understand both human language and programming logic.

These AI tools are trained on massive amounts of code and text. Over time, they learn patterns—how developers solve problems, how applications are structured, and how instructions in English can be mapped to actual code.

This is why modern tools can take a simple sentence and turn it into working code, and often into a small working application.

But beyond the technology, there’s another reason vibe coding is growing so fast: people want faster results.

In today’s world, speed matters. Whether you are a student, entrepreneur, or developer, the ability to quickly turn ideas into reality is incredibly valuable.

From Writing Code to Shaping Ideas

One of the most interesting aspects of vibe coding is how it shifts your role.

Instead of being someone who writes code line by line, you become someone who guides the system. Your job is to think clearly, define what you want, and refine the results.

This means the focus moves away from syntax and toward problem-solving.

In a way, coding becomes more creative. You are no longer limited by how fast you can type or how well you remember functions. Instead, your ability to think, design, and communicate becomes more important.

A Simple Example: Building Without Stress

Imagine you want to create a small website with a contact form.

Traditionally, you would:

- Write HTML for structure

- Add CSS for styling

- Use JavaScript for functionality

- Debug errors along the way

With vibe coding, the process feels lighter.

You might start by saying:

“Create a clean website with a header, a contact form, and a submit button.”

The AI generates the base structure.

Then you refine it:

“Make the design modern and responsive.”

Then again:

“Add validation to the form fields.”

Step by step, your idea evolves into a complete product—without the usual friction.
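As a rough illustration of the last refinement, the sketch below shows the kind of validation logic an AI tool might produce. It is written in Python as a server-side check, which is an assumption: a real contact form would usually also validate in the browser. The field names (`name`, `email`, `message`) and the simple email pattern are hypothetical.

```python
import re

# Deliberately simple pattern: something@something.something, no spaces.
EMAIL_PATTERN = re.compile(r"[^@\s]+@[^@\s]+\.[^@\s]+")


def validate_contact_form(fields: dict[str, str]) -> dict[str, str]:
    """Return a mapping of field name to error message; empty means valid."""
    errors: dict[str, str] = {}

    # Every field is required and must contain more than whitespace.
    for required in ("name", "email", "message"):
        if not fields.get(required, "").strip():
            errors[required] = "This field is required."

    # Only check the email format if the field was filled in.
    email = fields.get("email", "").strip()
    if email and not EMAIL_PATTERN.fullmatch(email):
        errors["email"] = "Please enter a valid email address."

    return errors


if __name__ == "__main__":
    print(validate_contact_form({"name": "Ada", "email": "not-an-email", "message": ""}))
```

Reviewing a result like this is where your own understanding matters: you decide whether the rules are strict enough for your project.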

The Real Benefits (Beyond the Hype)

It’s easy to think of vibe coding as just a shortcut, but its impact goes deeper.

For beginners, it removes the fear of getting started. Instead of spending weeks learning basics before building anything, they can jump straight into creating.

For experienced developers, it acts like a productivity booster. Repetitive tasks, boilerplate code, and debugging can be handled faster, allowing more focus on architecture and innovation.

There is also a strong creative advantage. When the barrier to building is low, people experiment more. They try new ideas, test concepts quickly, and iterate faster.

But It’s Not Magic

Despite all its advantages, vibe coding is not a perfect solution.

AI can make mistakes. Sometimes the generated code is inefficient, incomplete, or simply wrong. When that happens, you still need a basic understanding of programming to fix the issue.

There is also the risk of over-dependence. If you rely entirely on AI without learning the fundamentals, you may struggle when something breaks or when you need to build more complex systems.

In other words, vibe coding is powerful—but it works best when combined with real knowledge.

The Skills That Still Matter

Even in this new era, some skills remain essential.

Understanding logic, knowing how applications work, and being able to debug problems are still important. What changes is how you apply these skills.

Instead of writing everything from scratch, you guide, review, and improve what the AI produces.

Think of it like using a calculator. It makes calculations faster, but you still need to understand math to use it correctly.

Real-World Impact: Who is Using Vibe Coding?

Vibe coding is not limited to one type of user.

Students are using it to build projects and learn faster. Entrepreneurs are creating prototypes without hiring large development teams. Freelancers are completing projects more efficiently and taking on more clients.

Even professional developers are adopting it as part of their workflow.

This wide adoption suggests that vibe coding is more than a passing trend and is becoming a standard part of how software gets built.

Can You Actually Make Money With It?

Yes, and this is where things become very practical.

Because vibe coding speeds up development, it allows individuals to create and deliver projects quickly. This opens multiple earning opportunities.

You can build websites for small businesses, create automation tools, develop simple applications, or even launch your own digital products.

For example, a basic business website that might have taken days to build can now often be drafted in hours, leaving time for review and testing. That efficiency directly translates into income potential.

What the Future Looks Like

Looking ahead, vibe coding is likely to become even more advanced.

AI tools will get better at understanding context, generating accurate code, and handling complex systems. The interaction between humans and machines will become more natural—almost like a conversation.

At the same time, the role of developers will continue to evolve.

Instead of focusing on writing every detail, they will focus on designing systems, solving problems, and making strategic decisions.

Common Mistakes to Avoid

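- Relying fully on AI without understanding the code it produces

- Writing vague prompts that leave the AI guessing

- Ignoring errors and warnings in the generated code

- Not testing code before using or delivering it

- Skipping the programming basics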
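Testing does not have to be elaborate. The sketch below assumes an AI tool has produced a small `average` function (a hypothetical stand-in for any generated code) and shows a few plain checks you could run before trusting it, including an edge case that generated code often misses.

```python
def average(values: list[float]) -> float:
    """Return the arithmetic mean; stand-in for AI-generated code."""
    if not values:
        # An empty list would otherwise cause a division by zero.
        raise ValueError("average() needs at least one value")
    return sum(values) / len(values)


def run_checks() -> None:
    """Run a few quick checks, covering typical input and an edge case."""
    assert average([2, 4, 6]) == 4
    assert average([5]) == 5

    # Empty input should fail loudly rather than crash obscurely.
    try:
        average([])
    except ValueError:
        pass
    else:
        raise AssertionError("empty input should raise ValueError")


if __name__ == "__main__":
    run_checks()
    print("All checks passed.")
```

If a check fails, you can paste the failure back into your prompt and ask the AI to fix it, then run the checks again.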

Final Thoughts: A Shift You Shouldn’t Ignore

Vibe coding is not about replacing programmers. It’s about changing how programming works.

It lowers the barrier to entry, increases speed, and allows more people to turn their ideas into reality.

But like any powerful tool, it requires the right approach. The best results come when you combine AI assistance with your own understanding and creativity.

If you’re someone who wants to build, create, or even earn online, this is the perfect time to start exploring it.

Because in this new era, coding is no longer just about writing instructions for machines.

It’s about expressing ideas and letting technology bring them to life.

If you want to go deeper:

- Start practicing vibe coding today

- Build your first project

- Combine it with AI learning

And if you’re serious about mastering AI and building real-world applications, consider learning step-by-step through a structured course.