Introduction: AI Is Learning to Act, Not Just Respond

Artificial intelligence has come a long way in a short time. Not long ago, AI systems were mainly used to answer questions, generate content, or automate simple tasks. They were powerful, but they always depended on human input.

Now, a new shift in how AI works is changing that dynamic.

We are entering the age of Agentic AI, where AI systems don’t just respond: they plan, act, and execute tasks on their own. Instead of waiting for instructions at every step, they can take a goal and work toward completing it.

This shift is subtle on the surface, but its impact is massive. It changes how software works, how businesses operate, and how individuals can create value.

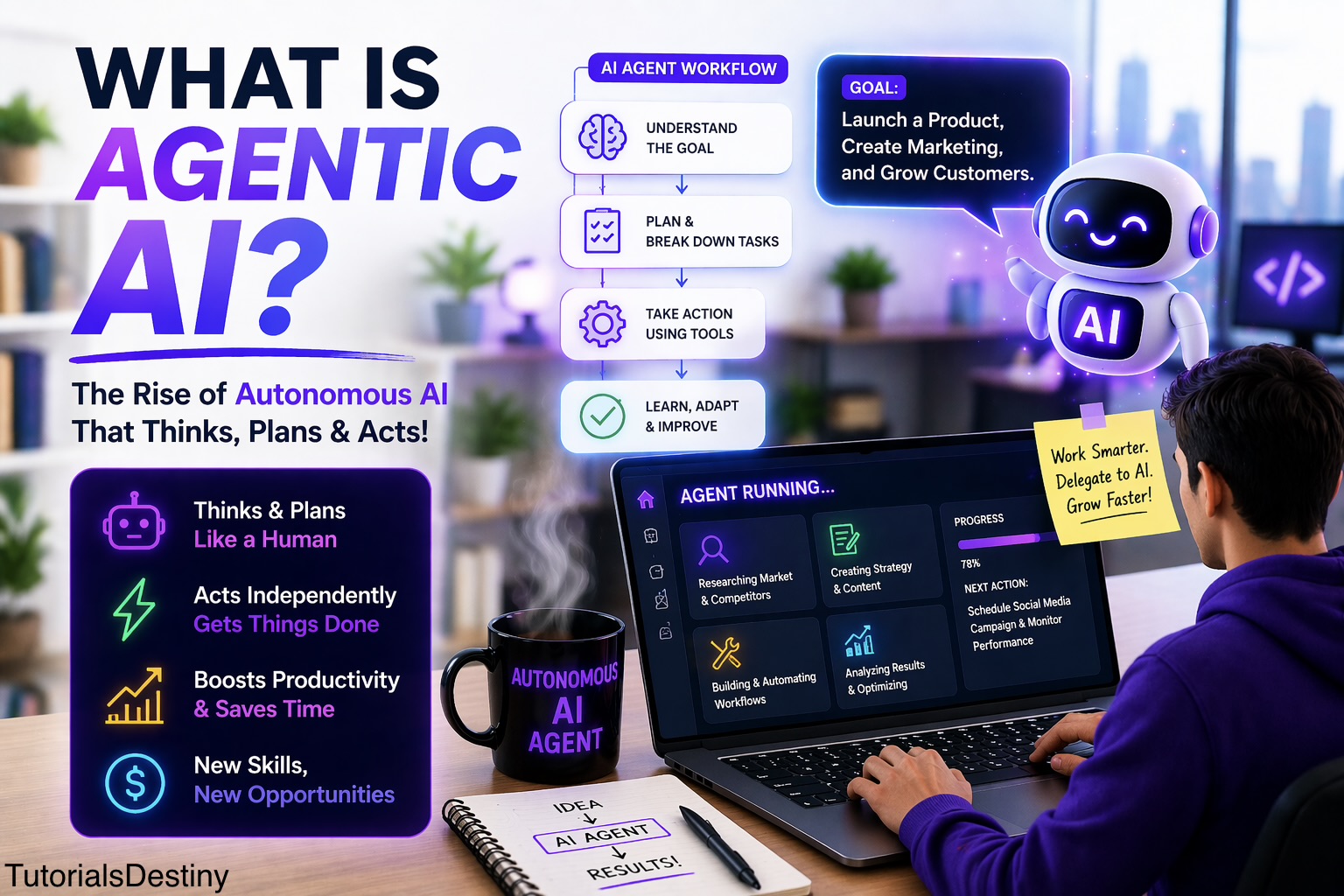

What is Agentic AI?

Agentic AI refers to artificial intelligence systems designed to function as autonomous agents. These systems are capable of understanding a goal, breaking it into steps, and taking action without needing constant human guidance.

In simple terms:

Agentic AI is AI that can think, plan, and act on its own to achieve a goal.

Instead of asking AI:

“Write a marketing email”

You might say:

“Help me launch a product and attract customers.”

An agentic system does not stop at a single output. It might:

- Analyze your product

- Identify your target audience

- Create marketing strategies

- Generate content

- Suggest improvements

This ability to go beyond a single task is what makes it powerful.

How Agentic AI Works

To understand why Agentic AI feels so advanced, it helps to look at how it operates behind the scenes.

When you assign a goal, the system goes through a continuous cycle:

1. Understanding the Goal

It interprets what you actually want. Human instructions are often vague, so the AI must define the objective clearly.

2. Planning the Steps

The system breaks the goal into smaller tasks. This is similar to how a human would plan a project.

3. Taking Action

It executes tasks using tools, data, or generated outputs.

4. Evaluating Results

It checks the results against the goal to see whether the actions are working.

5. Adapting

If something fails, it adjusts its approach and tries again.

This loop allows the system to behave in a way that feels intelligent and purposeful rather than reactive.
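The five-step cycle can be sketched as a small loop. This is a toy, not how any particular product works: it assumes a hard-coded plan and plain Python functions standing in for the language model and real tools an actual agent would use. The `Agent` and `Step` names and the example steps are hypothetical.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Step:
    name: str
    # Hypothetical action: receives the attempt number, returns True on success.
    action: Callable[[int], bool]


@dataclass
class Agent:
    max_retries: int = 2
    log: list[str] = field(default_factory=list)

    def understand(self, goal: str) -> str:
        # 1. Understanding the goal: turn loose wording into a normalized objective.
        return goal.strip().rstrip(".").lower()

    def plan(self, objective: str) -> list[Step]:
        # 2. Planning: a fixed, hypothetical breakdown of the objective.
        # A real agent would generate these steps from the objective itself.
        return [
            Step("research audience", lambda attempt: True),
            Step("draft email", lambda attempt: attempt >= 1),  # fails on the first try
            Step("review draft", lambda attempt: True),
        ]

    def run(self, goal: str) -> bool:
        objective = self.understand(goal)
        for step in self.plan(objective):
            for attempt in range(self.max_retries + 1):
                ok = step.action(attempt)  # 3. Taking action
                status = "ok" if ok else "failed"
                self.log.append(f"{step.name}: attempt {attempt} -> {status}")
                if ok:  # 4. Evaluating results
                    break
                # 5. Adapting: this sketch simply retries the step.
            else:
                return False  # every retry failed, so stop and report failure
        return True


agent = Agent()
print(agent.run("Help me launch a product."))  # True
print(agent.log)
```

The "draft email" step fails once and succeeds on the retry, so the log shows the evaluate-and-adapt part of the loop at work. A more capable agent would revise its plan after a failure instead of just retrying the same step.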

Agentic AI vs Traditional AI

The difference between traditional AI and Agentic AI is best understood through behavior.

Traditional AI acts like a responsive assistant. It waits for commands and delivers outputs.

Agentic AI acts like a self-directed worker. It takes initiative and continues working toward a goal.

Key Differences:

- Traditional AI focuses on single tasks

- Agentic AI focuses on complete outcomes

- Traditional AI needs continuous input

- Agentic AI works with minimal supervision

- Traditional AI responds

- Agentic AI acts and adapts

This shift from reaction to action is what makes Agentic AI a major breakthrough.

Real-World Example: From Idea to Execution

Let’s make this practical.

Imagine you want to start an online business.

With traditional AI, you would:

- Ask for business ideas

- Generate content separately

- Create a website manually

- Plan marketing step by step

With Agentic AI, you could simply define a goal:

“Create and launch a small online business.”

The system could then:

- Research profitable niches

- Suggest a business model

- Generate a website structure

- Create product descriptions

- Draft marketing campaigns

Instead of assisting in parts, it contributes to the entire process.

Why Agentic AI is Gaining Attention

Agentic AI is not just another tech trend. It is gaining traction because it solves real problems.

First, it dramatically improves speed. Tasks that used to take days can now be initiated and completed much faster.

Second, it reduces effort. Instead of managing every detail, users can focus on defining goals.

Third, it increases accessibility. Even people without deep technical skills can build and execute complex workflows.

In short, it allows people to:

- Do more in less time

- Build without large teams

- Turn ideas into results faster

Benefits of Agentic AI

The advantages of Agentic AI become clearer when you see how it impacts real work.

Increased Productivity

AI agents can handle repetitive and time-consuming tasks, freeing up time for higher-level thinking.

Better Decision Support

They can analyze data and suggest actions, helping users make informed decisions.

Scalability

One system can manage multiple tasks at the same time, which is difficult for an individual to do.

Consistency

Unlike humans, AI systems do not get tired, which helps keep their performance steady.

Innovation

When execution becomes easier, people experiment more and explore new ideas.

Limitations and Challenges

Despite its strengths, Agentic AI is not without flaws.

One major challenge is accuracy. AI systems can sometimes generate incorrect results or follow flawed logic. Without proper oversight, this can lead to poor outcomes.

Another concern is control. Since these systems act autonomously, it becomes important to define boundaries and monitor actions.

Security is also a key issue. Giving AI access to tools and data requires careful handling to avoid misuse.
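One common way to set boundaries is to route every tool call through a small gatekeeper. The sketch below assumes an allowlist of tools, a human approval step for risky actions, an action budget, and an audit log for monitoring. The class, tool names and approval callback are hypothetical illustrations, not the API of any specific agent framework.

```python
from typing import Callable


class GuardrailError(Exception):
    """Raised when an agent tries to step outside its boundaries."""


class GuardedExecutor:
    # Hypothetical gatekeeper: every tool call the agent makes goes through call().
    def __init__(self, tools: dict[str, Callable[[str], str]], allowed: set[str],
                 needs_approval: set[str], approve: Callable[[str, str], bool],
                 max_actions: int = 10):
        self.tools = tools
        self.allowed = allowed                # boundary: only these tools may run
        self.needs_approval = needs_approval  # risky tools need a human "yes"
        self.approve = approve                # stand-in for asking a person
        self.max_actions = max_actions        # every recorded attempt counts toward this budget
        self.audit_log: list[tuple[str, str, str]] = []  # monitoring trail

    def call(self, tool: str, arg: str) -> str:
        if len(self.audit_log) >= self.max_actions:
            raise GuardrailError("action budget exhausted")
        if tool not in self.allowed or tool not in self.tools:
            self.audit_log.append((tool, arg, "blocked"))
            raise GuardrailError(f"tool not allowed: {tool}")
        if tool in self.needs_approval and not self.approve(tool, arg):
            self.audit_log.append((tool, arg, "rejected"))
            raise GuardrailError(f"human rejected: {tool}")
        result = self.tools[tool](arg)
        self.audit_log.append((tool, arg, "ok"))
        return result


# Hypothetical tools; only "search" and "send_email" are allowed.
tools = {
    "search": lambda q: f"results for {q}",
    "send_email": lambda to: f"sent to {to}",
    "delete_files": lambda path: f"deleted {path}",
}
executor = GuardedExecutor(tools, allowed={"search", "send_email"},
                           needs_approval={"send_email"},
                           approve=lambda tool, arg: False)  # reviewer says no
print(executor.call("search", "profitable niches"))  # results for profitable niches
```

In this sketch, calling `delete_files` raises `GuardrailError` because it is not on the allowlist, and `send_email` is stopped because the reviewer rejects it. Either way, the attempt is still recorded in `audit_log`, so a person can see what the agent tried to do.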

Finally, there is the risk of over-dependence. If users rely completely on AI without understanding the process, they may struggle when problems arise.

Skills You Need in the Age of Agentic AI

As AI becomes more autonomous, the skills required are evolving.

You don’t need to be an expert programmer, but you do need to think clearly and strategically.

Important Skills:

- Clear goal setting

- Prompt writing

- Critical thinking

- Basic technical understanding

- Ability to evaluate results

In this new environment, your role shifts from “doing everything” to guiding intelligent systems.

Agentic AI and Vibe Coding: How They Connect

If you’ve explored vibe coding, you already have a head start.

Vibe coding focuses on creating code using AI prompts. It helps you build applications faster without deep coding knowledge.

Agentic AI goes a step further.

Instead of just generating code, it can use that code to complete entire tasks or workflows.

Think of it this way:

- Vibe Coding = Building tools with AI

- Agentic AI = Using AI to run and manage those tools

Together, they form a powerful combination for creators and developers.
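The "build tools, then let an agent run them" idea can be sketched with a simple tool registry. Here each small function stands in for a tool you might have produced with vibe coding, and `run_workflow` plays the agent's role by chaining those tools and passing each output to the next step. The registry, tool names and the fixed step list are hypothetical; a real agent would choose the steps itself.

```python
from typing import Callable

# Hypothetical registry mapping tool names to functions.
TOOLS: dict[str, Callable[[str], str]] = {}


def tool(fn: Callable[[str], str]) -> Callable[[str], str]:
    """Register a function so the agent can look it up by name."""
    TOOLS[fn.__name__] = fn
    return fn


@tool
def summarize(text: str) -> str:
    return text.split(".")[0] + "."  # naive: keep only the first sentence


@tool
def make_headline(text: str) -> str:
    return text.rstrip(".").title()


@tool
def format_post(text: str) -> str:
    return f"[POST] {text}"


def run_workflow(steps: list[str], data: str) -> str:
    """Agent side: run registered tools in order, feeding each output forward."""
    for name in steps:
        if name not in TOOLS:
            raise KeyError(f"no tool named {name!r}")
        data = TOOLS[name](data)
    return data


print(run_workflow(["summarize", "make_headline", "format_post"],
                   "agents plan and act. they also adapt."))  # [POST] Agents Plan And Act
```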

How to Get Started with Agentic AI

Getting started is easier than it might seem.

Begin by exploring AI tools that support automation and task execution. Start with simple goals, such as automating small workflows or generating structured outputs.

As you gain confidence, move toward more complex tasks like building systems that handle multiple steps.

The key is to experiment consistently. The more you use these systems, the better you understand how to guide them effectively.

How to Make Money Using Agentic AI

Agentic AI is not just about technology; it is also about opportunity.

Because it improves efficiency, it opens new ways to earn.

You can use it to automate services for businesses, build digital products, or manage multiple projects simultaneously.

Popular Ways to Earn:

- Offering automation services to small businesses

- Building AI-powered tools or SaaS products

- Freelancing with faster delivery

- Creating and selling digital content

- Managing online businesses with less manual effort

For example, you could use Agentic AI to create and manage websites for clients, reducing the time required and increasing your earning potential.

The Future of Agentic AI

Looking ahead, Agentic AI is expected to become more advanced and more integrated into everyday workflows.

We may see systems where multiple AI agents collaborate, each handling different parts of a task. These systems could operate almost like digital teams.
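A "digital team" of agents can be sketched as an orchestrator that hands each subtask to the agent with the matching role and shares earlier results with later agents. This is a minimal sketch under stated assumptions: the roles, tasks and `RoleAgent` class are hypothetical, each agent's "work" is just a formatted string in place of a real model call, and the tasks run one after another for simplicity, although real systems might run some agents in parallel.

```python
from dataclasses import dataclass


@dataclass
class RoleAgent:
    role: str

    def handle(self, task: str, context: dict[str, str]) -> str:
        # Stand-in for real work: report which earlier results this agent could see.
        seen = ", ".join(sorted(context)) or "nothing"
        return f"{self.role} did '{task}' using {seen}"


def orchestrate(plan: list[tuple[str, str]], team: dict[str, RoleAgent]) -> dict[str, str]:
    """Run (role, task) pairs in order, sharing earlier results with later agents."""
    results: dict[str, str] = {}
    for role, task in plan:
        agent = team[role]
        results[task] = agent.handle(task, dict(results))  # pass a copy of shared context
    return results


team = {role: RoleAgent(role) for role in ("researcher", "writer", "reviewer")}
plan = [("researcher", "find niche"), ("writer", "draft copy"), ("reviewer", "check copy")]
for task, outcome in orchestrate(plan, team).items():
    print(outcome)
```

Passing a copy of the shared results keeps one agent from accidentally changing what the others see, which makes the hand-offs between "team members" easier to follow.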

At the same time, the interaction between humans and AI will become more natural. Instead of giving detailed instructions, users will focus on defining outcomes.

However, this growth will also bring challenges. Ethical concerns, regulations, and system reliability will become increasingly important.

Will Agentic AI Replace Humans?

This is a common concern, but the reality is more balanced.

Agentic AI will replace certain repetitive tasks, but it will also create new roles and opportunities.

Humans will continue to play a critical role in:

- Strategic thinking

- Creativity

- Leadership

- Ethical decision-making

Rather than replacing humans, Agentic AI is more likely to enhance human capabilities.

Final Thoughts

Agentic AI represents a major shift in how technology is used.

It moves us from a world where AI assists with tasks to one where AI can take initiative and drive outcomes.

For individuals, this means more power to build and create. For businesses, it means greater efficiency and scalability.

But success with Agentic AI depends on how well you use it. The better you define goals, guide systems, and evaluate results, the more value you can extract.

Conclusion

Agentic AI is not just another buzzword: it offers a glimpse into the future of work.

By combining autonomy, intelligence, and adaptability, it is transforming how tasks are performed and how ideas are executed.

If you are willing to learn and experiment, this technology offers a powerful advantage.

Because the future is not just about using AI tools.

It’s about working with systems that can think, act, and evolve alongside you.